For CTOs, engineering managers, and technical leads across Australia, the pressure to deliver secure, high-quality software at speed has never been higher. Modern development isn’t just fast; it’s continuous. This acceleration has pushed the practice of code review, the critical quality gate, to its absolute limits. The old, heavy-handed processes simply clog the CI/CD pipeline.

This brings us to the fundamental strategic choice facing every serious development team today: manual vs. automated code review.

The debate isn’t simply about preferring human insight over machine logic; it’s about optimising strategy, managing operational costs, and preventing developer burnout. It’s about leveraging human intelligence where it matters most and relying on machine speed for everything else. This deep-dive analysis will cut through the noise to show you which approach delivers better results, and why a strategic hybrid model is the only way forward.

We will specifically address the critical question of which delivers better code quality, manual or automated review, by examining the strengths and weaknesses of both methods, ultimately helping you optimise your engineering workflow.

The Indispensable Value of Human Insight

The foundational practice of manual review, the inspection of source code by developers other than the author, remains an invaluable and irreplaceable component of software quality assurance.

The core advantage of the human reviewer is context awareness. A developer looking at a pull request doesn’t just see lines of code; they understand the intent behind the changes, the architectural implications, and the business logic the code is meant to satisfy. They understand why the code was written in a certain way, allowing them to detect subtle issues that simple rules-based automation cannot perceive.

Architectural and Business Logic Validation

Automation excels at enforcing predefined rules, but it lacks the capacity for critical thinking or design judgment. Manual reviews are essential for evaluating the high-level aspects of the codebase.

For example, when implementing greenfield features or complex system integrations, a human reviewer ensures the proposed solution aligns with the overall system design and meets specific business requirements. This is where human reasoning is crucial: it is needed to validate dimensions that no rule can capture, such as whether the solution respects user experience principles, which often start with sound UI/UX design and architectural decisions.

Furthermore, certain security risks are inherently difficult to spot because they depend on nuanced logic, context-specific flows, or specific design decisions within the application. Analysing these threats requires observing the application design from an attacker’s perspective to uncover potential backdoors, a process achieved most reliably through manual analysis and discussions with the technical architect’s team.

Knowledge Sharing and Team Resilience

Beyond defect finding, manual review is a powerful engine for collaboration and knowledge sharing. It serves as a primary channel for mentorship, allowing experienced developers to guide junior team members and ensure that code practices and domain knowledge are effectively transferred across the team. This enhances the overall skill level and resilience of the organisation, cementing the human reviewer’s role as a mechanism for institutional learning and software design maturation.

The Critical Limitations of Relying on Manual Review

Despite these undeniable contributions, reliance on manual code review as the primary defence mechanism introduces severe limitations in cost, consistency, and scalability, making it incompatible with large-scale, high-velocity development. These limitations become clear when you look at the sheer volume of code changes in a modern environment.

Resource Cost and Consistency

Manual review is inherently resource- and time-intensive. Reviewing each line manually can take days or weeks for large codebases, incurring significant costs in hours of skilled labour. The financial implication is that manual review costs scale linearly with the volume of code changes, creating an unsustainable model for growth.

Consistency is also a major drawback of human-dependent processes. Unlike automated tools, human reviewers are subject to fatigue, distraction, and inconsistency, which can cause them to overlook critical details or vulnerabilities, especially when reviewing long or complex code sections. This risk of human error impacts the reliability of detection, particularly for fast-moving projects like mobile app development.

The Cost in Human Capital

The consequences of inefficient manual processes are felt far beyond deadlines. Code review delays are a well-documented source of significant bottlenecks in engineering workflows. When workflows are scattered and pull requests (PRs) languish awaiting attention, the team’s momentum is lost. GitLab’s survey identified code review delays as the third biggest reason developers feel fatigued, following long work hours and context switching. This correlation between reliance on inefficient manual review and elevated developer burnout reveals that the true cost of process inefficiency is often paid in human capital and employee retention.

Quantifying the Bottleneck: Code Review Efficiency Metrics

To effectively manage this risk, technical leadership must monitor specific code review efficiency metrics that translate quality assurance processes into quantifiable business language. These include:

- Review Time to Merge (RTTM): This measures the duration from the start of the review process to the code merge. A persistently high RTTM indicates a systemic bottleneck, so bringing it down should be a priority.

- Reviewer Load: This tracks the number of open pull requests assigned to each reviewer. Monitoring reviewer load ensures balanced resource optimisation and prevents single points of failure.

- Code Ownership Health: This metric ensures that the codebase is adequately covered by designated domain experts who are best equipped to review relevant and often sensitive sections of the code.

When these metrics flag poor performance, it is typically a symptom of asking expensive human reviewers to perform repetitive, low-value checks, which people execute slowly and inconsistently. The relentless pursuit of minimising RTTM and balancing reviewer load is central to adopting a mature DevOps culture.
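As a rough illustration of how the first two metrics might be computed, here is a minimal Python sketch. The `PullRequest` record and its field names are hypothetical; in practice the data would come from whatever your Git hosting platform exports.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class PullRequest:
    """Hypothetical PR record; field names are illustrative only."""
    reviewer: str
    review_started: datetime
    merged_at: Optional[datetime] = None  # None while the PR is still open


def median_rttm_hours(prs: list[PullRequest]) -> Optional[float]:
    """Median Review Time to Merge, in hours, across merged PRs."""
    hours = [
        (pr.merged_at - pr.review_started).total_seconds() / 3600
        for pr in prs
        if pr.merged_at is not None
    ]
    return median(hours) if hours else None


def reviewer_load(prs: list[PullRequest]) -> Counter:
    """Number of still-open PRs assigned to each reviewer."""
    return Counter(pr.reviewer for pr in prs if pr.merged_at is None)


# Small made-up sample to show the calculation.
start = datetime(2024, 1, 1, 9, 0)
sample = [
    PullRequest("alice", start, start + timedelta(hours=4)),
    PullRequest("bob", start, start + timedelta(hours=30)),
    PullRequest("bob", start, start + timedelta(hours=8)),
    PullRequest("alice", start),  # open
    PullRequest("alice", start),  # open
]

print("Median RTTM (h):", median_rttm_hours(sample))
print("Reviewer load:", dict(reviewer_load(sample)))
```

The median is used rather than the mean so that a single long-running PR does not dominate the figure; that is a design choice for this sketch, not a requirement of the metric.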

The Engine of Consistency: Automated Code Review

Automated code review, defined as the process of using software tools to automatically scan and evaluate source code for issues related to syntax, security, and violation of standards, represents the only viable method for maintaining quality at enterprise scale. This is why the conversation about manual vs. automated code review is shifting from ‘either/or’ to ‘how to combine them’.

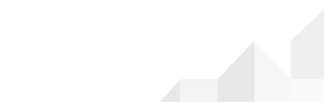

Speed, Consistency, and AI Enhancement

Automated tools deliver consistency and speed unparalleled by human teams.

- Unmatched Efficiency: Tools can analyse thousands of lines of code in seconds, plugging directly into continuous integration (CI) pipelines to offer immediate feedback.

- Reliable Governance: They ensure consistent checks against style guides, detect syntax errors, and enforce coding standards reliably, as tools do not get tired or distracted. This repeatability is fundamental for enterprise-level governance.

- Advanced AI Augmentation: Modern solutions are rapidly moving beyond rudimentary static analysis. AI-based tools now leverage models to offer intelligent, context-aware feedback. Tools like CodeRabbit aim to function like an experienced reviewer, flagging logic gaps, inconsistent behaviour, or potential edge-case failures. AI assistants can autonomously identify issues ranging from readability concerns, logic bugs, and common code smells to best-practice deviations. The most significant immediate application is the augmentation of the human reviewer: by handling this first pass, AI assistants dramatically reduce the time human reviewers spend on initial evaluations.

This blurring of the boundary between manual and automated review forces human expertise to shift focus entirely to the highest-level domains: system integration validation, risk assessment, and architectural integrity.

Strategic Synthesis: The Hybrid Code Review Model

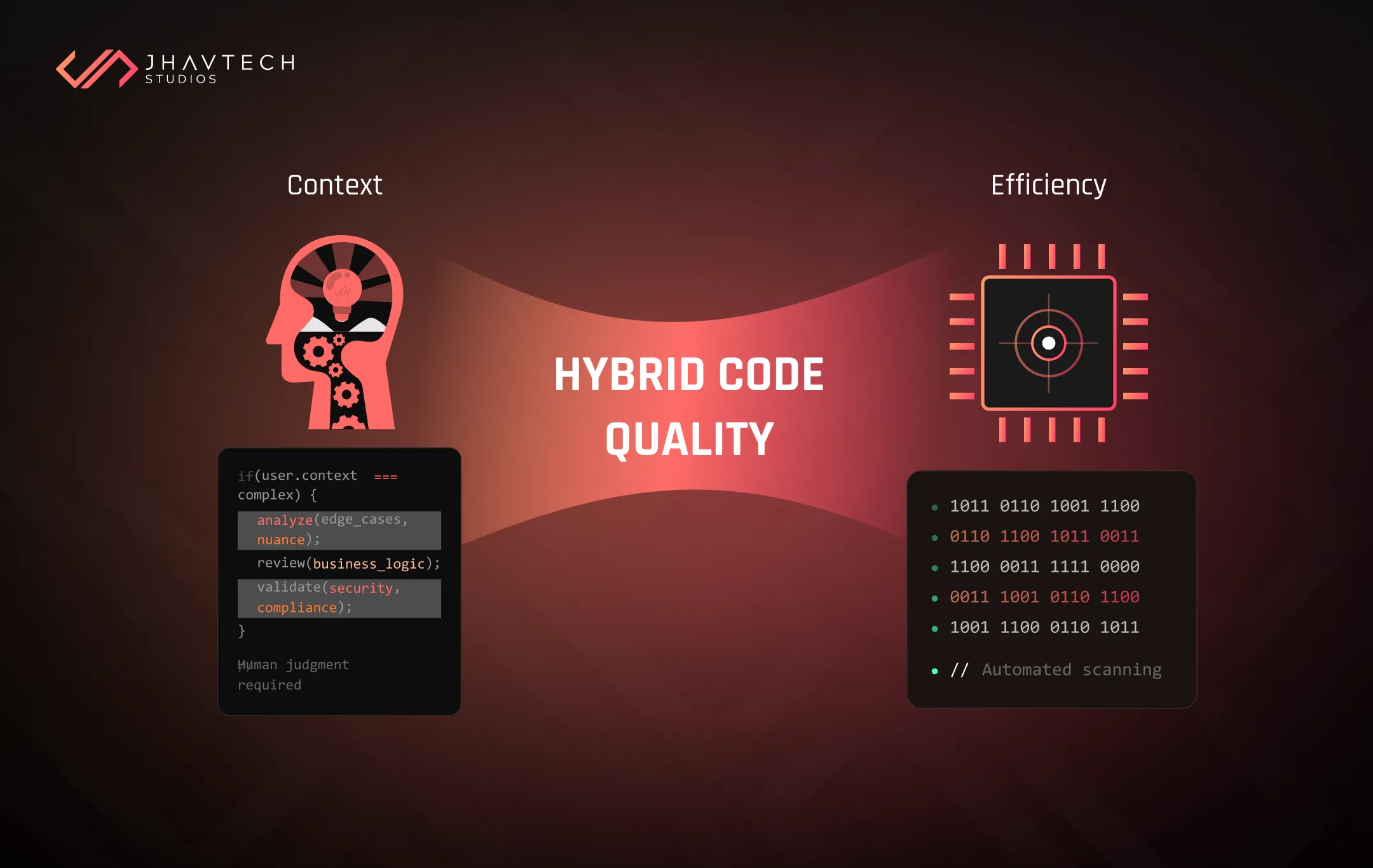

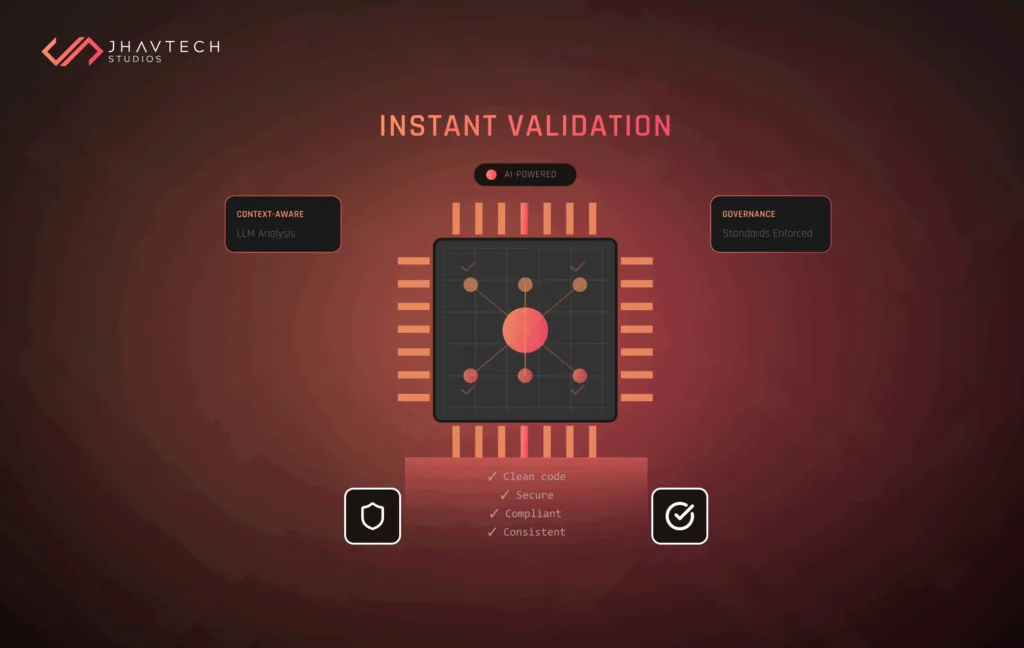

When evaluating which delivers better code quality, manual or automated review, the answer depends entirely on the criteria. Automated review is superior for speed, consistency, and scalability; manual review is superior for architectural integrity, design, and knowledge sharing.

Therefore, the most effective approach is not a choice between manual and automated code review, but a strategic orchestration of their strengths: the Hybrid Code Review Model.

The Imperative of Combination: Depth Meets Breadth

Superior results are achieved when automated review provides breadth and consistency, covering the entire codebase and enforcing policy, while manual review provides essential depth and context, focusing only on the highest-value, most complex tasks.

This philosophy dictates an automation-first workflow. Teams must begin with an automated scan to handle all repetitive or technical checks before a human reviewer is ever assigned the task. This practice clears the noise, making the subsequent human review highly efficient and targeted.
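The automation-first rule can be sketched as a simple gate: a pull request is only handed to a human once every automated check has passed. The `AutomatedResult` type and the check names below are hypothetical, and a real pipeline would normally express this gate in its CI configuration rather than in application code.

```python
from dataclasses import dataclass


@dataclass
class AutomatedResult:
    """Hypothetical outcome of one automated check (linter, tests, scanner)."""
    check: str
    passed: bool


def ready_for_human_review(results: list[AutomatedResult]) -> tuple[bool, list[str]]:
    """Return (ready, failing_checks).

    A PR is ready for a human reviewer only if at least one automated
    check ran and none of them failed.
    """
    failing = [r.check for r in results if not r.passed]
    ready = bool(results) and not failing
    return ready, failing


# Example: the author fixes the lint failure before anyone is assigned.
results = [
    AutomatedResult("unit-tests", True),
    AutomatedResult("lint", False),
    AutomatedResult("dependency-scan", True),
]
ready, failing = ready_for_human_review(results)
print("Assign reviewer" if ready else f"Return to author, failing: {failing}")
```

Treating "no checks ran" as not ready is a deliberate choice in this sketch, so that a misconfigured pipeline cannot silently skip the automated pass.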

Integrating Automated Code Review Tools

Successful adoption of the hybrid model is often determined by integrating automated code review tools into the existing workflow. For high adoption rates, tools must integrate cleanly into existing ecosystems (GitHub, GitLab, Bitbucket) and leverage existing CI/CD pipelines.

The most critical factor is the accuracy of review comments. Tools that surface irrelevant or low-priority findings (false positives) quickly become background noise and are ignored or abandoned by developers. For a tool to be effective, its accuracy must be high enough to consistently highlight only critical issues, not cosmetic changes.
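One common way to keep the signal high is to post only findings at or above a severity threshold and leave cosmetic items to an auto-formatter. The sketch below assumes a hypothetical `Finding` record and a four-level `Severity` scale; real tools each define their own levels and configuration.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    """Illustrative severity scale; actual tools use their own levels."""
    COSMETIC = 1
    MINOR = 2
    MAJOR = 3
    CRITICAL = 4


@dataclass
class Finding:
    """Hypothetical finding reported by an automated review tool."""
    rule: str
    severity: Severity
    message: str


def comments_to_post(
    findings: list[Finding], threshold: Severity = Severity.MAJOR
) -> list[Finding]:
    """Keep findings at or above the threshold, most severe first."""
    kept = [f for f in findings if f.severity >= threshold]
    return sorted(kept, key=lambda f: f.severity, reverse=True)


findings = [
    Finding("trailing-whitespace", Severity.COSMETIC, "Trailing whitespace"),
    Finding("null-deref", Severity.MAJOR, "Possible None dereference"),
    Finding("sql-injection", Severity.CRITICAL, "Unparameterised SQL query"),
    Finding("naming", Severity.MINOR, "Variable name is not descriptive"),
]

for f in comments_to_post(findings):
    print(f"[{f.severity.name}] {f.rule}: {f.message}")
```

The threshold is a tuning knob: set it too low and the noise returns, too high and real problems go unreported.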

Quantifying the Hybrid ROI: The Cost Advantage

The principal economic justification for automation in the manual vs. automated code review debate is the dramatic reduction in the reliance on expensive, specialised manual labour for repetitive, high-volume tasks. This provides massive non-linear efficiency gains, directly addressing accrued technical debt.

Consider a large enterprise scenario involving 300 developers, each generating approximately one pull request per day. If every PR requires a 15-minute manual security review, that amounts to 75 review hours per working day. Over roughly 250 working days, this necessitates 18,750 hours of specialised labour per year, equating to an annual labour cost of roughly $1.8 million (about $96 per hour).

Strategic automation fundamentally alters this cost structure. By implementing automated tools capable of scanning for common issues, the volume of high-risk PRs needing human security review can be reduced by as much as 90%. This optimisation allows the organisation to maintain its existing specialised FTE count while cutting the associated labour costs by over 80%. Automation acts as a force multiplier, leveraging the expertise of high-cost personnel only for the most critical security and architectural analyses.
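The arithmetic behind this scenario can be reproduced in a few lines of Python. Two inputs are inferred rather than stated in the scenario: 250 working days per year (18,750 hours divided by 75 hours per day) and an hourly rate of $96 ($1.8 million divided by 18,750 hours). Tool licensing and triage overhead are not modelled.

```python
# Figures from the scenario; the two marked constants are inferred, not stated.
DEVELOPERS = 300
PRS_PER_DEV_PER_DAY = 1
REVIEW_MINUTES_PER_PR = 15
WORKING_DAYS_PER_YEAR = 250   # assumption: 18,750 h / 75 h per day
HOURLY_RATE = 96              # assumption: $1.8M / 18,750 h
REDUCTION_PERCENT = 90        # upper bound quoted for PRs no longer needing human review


def annual_review_hours(reduction_percent: int = 0) -> float:
    """Hours of human security review per year after automation removes a share of PRs."""
    prs_per_year = DEVELOPERS * PRS_PER_DEV_PER_DAY * WORKING_DAYS_PER_YEAR
    reviewed_prs = prs_per_year * (100 - reduction_percent) / 100
    return reviewed_prs * REVIEW_MINUTES_PER_PR / 60


baseline_hours = annual_review_hours()
hybrid_hours = annual_review_hours(REDUCTION_PERCENT)
baseline_cost = baseline_hours * HOURLY_RATE
hybrid_cost = hybrid_hours * HOURLY_RATE
saving_fraction = 1 - hybrid_cost / baseline_cost

print(f"Manual only: {baseline_hours:,.0f} h, ${baseline_cost:,.0f}")
print(f"Hybrid:      {hybrid_hours:,.0f} h, ${hybrid_cost:,.0f}")
print(f"Review labour saved: {saving_fraction:.0%}")
```

Under these assumptions, a full 90% reduction in reviewed PRs cuts review labour by the same 90%. The more conservative "over 80%" figure may leave room for tooling and triage costs, although the scenario does not itemise them.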

Comparison Questions: Manual vs. Automated Code Inspection

The goal of modern code inspection is to ensure both technical correctness and architectural soundness. The following summary table answers the core comparison questions on manual vs. automated code inspection by detailing how the hybrid model achieves the optimal outcome:

Conclusion: Achieving Superior Results Through Strategy

The central question, whether manual or automated code review delivers better results, has a nuanced answer: neither does on its own. Superior results are achieved only through the Strategic Hybrid Model. This model delivers predictable speed and massive cost efficiencies (via automation) while simultaneously ensuring superior depth and quality assurance (via human experts). The operational advantage lies in the maximised ROI, secured by reserving high-cost human labour for the highest-risk, highest-value cognitive tasks.

For Australian businesses and organisations looking to migrate from costly, inconsistent manual processes to a highly efficient hybrid model, the transition requires specialised expertise in process governance, toolchain selection, and CI/CD integration. Achieving this strategic optimisation requires a systematic approach rooted in diagnostic assessment and expert implementation.

Stop viewing manual vs. automated code review as a conflict and start viewing it as a coordinated, highly efficient operation that delivers superior code quality, accelerates time-to-market, and frees your senior engineers to focus on innovation, not inspection. If your development process is currently characterised by high RTTM or inconsistent quality, or you are facing a software project rescue scenario, these are clear indicators that your review process requires a strategic overhaul.

Ready to Optimise Your Engineering Workflow?

Ready to transition your team to a high-velocity, high-quality development pipeline? Jhavtech Studios offers specialised IT consulting and expert implementation to help you diagnose bottlenecks, select the ideal toolchain, and integrate a robust, hybrid code review model. Don’t let review latency slow your business down.

Contact Jhavtech Studios today to schedule your free consultation and code review.
