Building AI That Earns Trust

Artificial Intelligence has gone from a futuristic concept to the core engine of modern digital transformation. From the sophisticated predictive analytics shaping supply chains to the machine learning powering healthcare diagnostics, AI is now central to business success.

But as AI becomes more powerful, one question rightly dominates every boardroom discussion, from Sydney to Melbourne to Perth:

“Can we make AI smarter without losing control, inviting regulatory penalties, or eroding customer trust?”

The answer, unequivocally, is yes. However, achieving this balance requires moving beyond abstract ‘ethics’ and implementing a concrete, verifiable responsible AI framework. This framework is not a philosophical paper; it is an actionable operational blueprint that incorporates governance, oversight, and quantifiable risk mitigation. It is the essential structure that ensures your AI systems are trustworthy, scalable, and actively uphold the principles that protect your customers and your brand.

In this post, we break down exactly how your business can achieve that balance, and how Jhavtech Studios builds trustworthy, high-performance AI-powered apps that protect users, comply with global standards, and deliver measurable results.

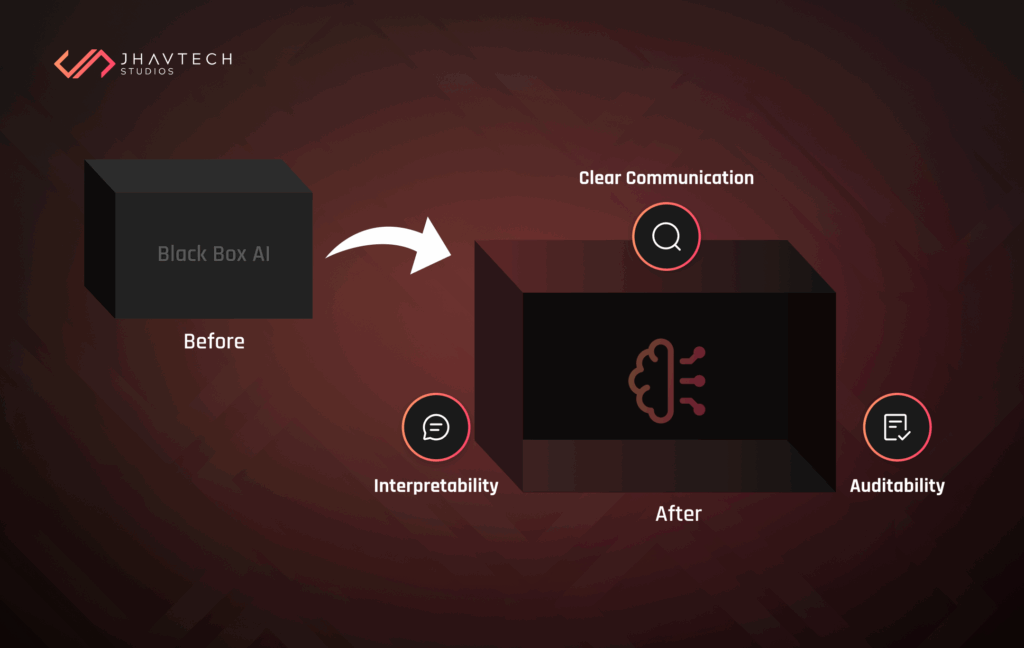

1. Transparency in AI: Opening the Black Box with Explainable AI Implementation

For too long, AI models have been referred to as “black boxes.” They take in data and churn out a prediction or a decision, but the precise internal logic, the ‘why’, remains mysterious, even to the developers themselves. This opacity is a fundamental business risk. If you cannot explain why an algorithm failed, produced a biased outcome, or made a specific recommendation, your risk exposure increases sharply.

Transparency changes that. It is the core principle that ensures users and stakeholders understand how and why an AI system behaves the way it does, building foundational trust and confidence. For a B2B audience, this means implementing technical solutions that deliver verifiable clarity.

How to Build Transparency into AI Apps

The technical term for delivering this clarity is Explainable AI (XAI). XAI is a set of processes and methods that allows human users to comprehend and trust the results created by machine learning algorithms.

- Implement Model Interpretability: XAI ensures that every decision made during the machine learning process can be traced and explained. This allows developers to ensure the system is working as expected and enables auditors to understand the underlying basis for decision-making.

- Prioritise Auditability: Systems that provide transparency are, by nature, auditable. This means maintaining clear audit trails, robust model documentation (sometimes referred to as “Model Cards”), and detailed logs of data sources and updates for compliance and quality checks.

- Focus on Clear Communication: Being transparent also requires communication. You must clearly define and communicate the types of data included and excluded from AI models, providing reasoning behind the selection to help users understand the model’s limitations and capabilities.
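
As an illustration of model interpretability, the sketch below uses permutation importance, one common model-agnostic technique: shuffle one input feature at a time and measure how much accuracy drops. It is a minimal sketch under stated assumptions, not a description of any particular product's tooling; `toy_model`, the feature names, and the sample rows are hypothetical.

```python
import random
from typing import Callable, List, Sequence

Row = List[float]


def accuracy(predict: Callable[[Row], int], rows: Sequence[Row], labels: Sequence[int]) -> float:
    """Share of rows where the model's prediction matches the label."""
    correct = sum(1 for row, label in zip(rows, labels) if predict(row) == label)
    return correct / len(labels)


def permutation_importance(
    predict: Callable[[Row], int],
    rows: Sequence[Row],
    labels: Sequence[int],
    n_repeats: int = 20,
    seed: int = 0,
) -> List[float]:
    """Average accuracy drop per feature when that feature's column is shuffled."""
    rng = random.Random(seed)
    baseline = accuracy(predict, rows, labels)
    importances = []
    for col in range(len(rows[0])):
        drops = []
        for _ in range(n_repeats):
            shuffled = [row[col] for row in rows]
            rng.shuffle(shuffled)
            # Rebuild each row with only the chosen column replaced.
            permuted = [row[:col] + [value] + row[col + 1:] for row, value in zip(rows, shuffled)]
            drops.append(baseline - accuracy(predict, permuted, labels))
        importances.append(sum(drops) / n_repeats)
    return importances


# Hypothetical model: approves when income (feature 0) exceeds 50; ignores shoe size (feature 1).
def toy_model(row: Row) -> int:
    income, _shoe_size = row
    return 1 if income > 50.0 else 0


rows = [[30.0, 9.0], [80.0, 7.0], [45.0, 11.0], [95.0, 8.0], [60.0, 10.0], [20.0, 6.0]]
labels = [toy_model(r) for r in rows]
scores = permutation_importance(toy_model, rows, labels)
print(dict(zip(["income", "shoe_size"], scores)))
```

A feature the model ignores scores zero, while a feature the model relies on shows a positive drop. Reports like this give auditors a starting point for asking whether the influential features are appropriate ones.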

At Jhavtech Studios, we embed XAI principles from the UI/UX design stage. We apply rigorous model interpretability frameworks and data lineage tools so that every AI decision can be traced, reviewed, and justified, both technically and ethically.

2. Ethical AI: Designing Fair and Responsible AI Framework Systems

The conversation around ethics in AI has shifted dramatically. It is no longer optional; it is an essential operational requirement. Unchecked bias in algorithms can lead to unfair, discriminatory, or misleading results, severely damaging user trust and leading to significant legal liability. Furthermore, 65 percent of customer experience leaders now view AI not as a fad, but as a strategic necessity, making fairness a critical requirement for market adoption.

To ensure equitable treatment, organisations must establish a comprehensive and mandated responsible AI framework. This is the necessary governance layer that moves beyond good intentions to define clear, consistent rules and procedures for managing AI systems.

Common Ethical Issues in AI

The most immediate threat is bias, which frequently stems from two sources:

- Data Bias: When training data does not represent all user demographics equitably.

- Algorithm Bias: When algorithms amplify stereotypes or misinformation, often resulting in unequal treatment of different groups or individuals.
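
Data bias of the kind described above can be surfaced with a simple representation check that compares each group's share of the training data with its share of the intended user base. The sketch below is illustrative only: the age bands, the reference shares, and the 5-point tolerance are assumptions chosen for the example.

```python
from collections import Counter
from typing import Dict, List


def representation_gaps(groups: List[str], reference_shares: Dict[str, float]) -> Dict[str, float]:
    """Training-data share minus reference share for each group (negative = under-represented)."""
    counts = Counter(groups)
    total = len(groups)
    return {group: counts.get(group, 0) / total - share for group, share in reference_shares.items()}


def under_represented(gaps: Dict[str, float], tolerance: float = 0.05) -> List[str]:
    """Groups whose training share falls short of the reference by more than `tolerance`."""
    return sorted(group for group, gap in gaps.items() if gap < -tolerance)


# Hypothetical training set labelled by age band, and assumed shares of the target user base.
training_groups = ["18-34"] * 70 + ["35-54"] * 25 + ["55+"] * 5
reference = {"18-34": 0.35, "35-54": 0.35, "55+": 0.30}

gaps = representation_gaps(training_groups, reference)
flagged = under_represented(gaps)
print(flagged)
```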

Best practices for preventing AI bias in application development

Eliminating AI bias is critical to ensuring systems treat different groups or individuals equitably. This requires a dedicated three-phase approach integrated directly into the development lifecycle:

- Data Preparation and Pre-processing: Prevention starts with ensuring diversity and inclusion in data sources. Organisations must pre-process data to identify and remove biases, ensuring the data used for training is diverse, high-quality, and representative of the intended user groups.

- Model Testing and Constraints: Fairness constraints must be applied during model testing. This includes using rigorous fairness evaluation tests to spot and address disparities in algorithmic outputs before deployment.

- Post-processing and Auditing: Conduct continuous and regular assessments to identify and eliminate biases within the AI software. Maintain detailed records of bias detection, evaluation, and remediation processes to demonstrate a commitment to customer transparency and verifiable bias prevention.
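
One way to make the fairness evaluation step concrete is to compare selection rates across groups. The sketch below computes two widely used measures, the demographic parity difference and the disparate impact ratio. The group labels and decisions are hypothetical, and the 0.8 threshold is the commonly cited "four-fifths" rule of thumb, used here as an assumption rather than a legal test.

```python
from typing import Dict, List, Tuple


def selection_rates(decisions: List[Tuple[str, int]]) -> Dict[str, float]:
    """Share of positive outcomes (1) per group from (group, outcome) pairs."""
    totals: Dict[str, int] = {}
    positives: Dict[str, int] = {}
    for group, outcome in decisions:
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + outcome
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(rates: Dict[str, float]) -> float:
    """Gap between the highest and lowest group selection rates (0 means parity)."""
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(rates: Dict[str, float]) -> float:
    """Lowest selection rate divided by the highest (1 means parity)."""
    highest = max(rates.values())
    return 1.0 if highest == 0 else min(rates.values()) / highest


# Hypothetical model decisions: group A approved 8 of 10 times, group B 5 of 10.
decisions = [("A", 1)] * 8 + [("A", 0)] * 2 + [("B", 1)] * 5 + [("B", 0)] * 5

rates = selection_rates(decisions)
gap = demographic_parity_difference(rates)
ratio = disparate_impact_ratio(rates)
passes_four_fifths = ratio >= 0.8  # assumed threshold for this example
print(rates, gap, ratio, passes_four_fifths)
```

Recording these numbers for every release is one simple way to build the bias detection and remediation record described above.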

The responsible AI framework serves as the overarching structure for these practices, ensuring that core principles like fairness, reliability, and accountability are operationalised and documented.

3. Security: The Foundation of Safe AI Apps and AI Risk Mitigation and Governance

AI applications rely on vast amounts of sensitive data, from personal preferences to healthcare records. Without proper protection, this data is a prime target for cyberattacks, data breaches, or misuse. Security is not a feature you bolt on later; it must be ingrained through a structural approach to AI risk mitigation and governance.

AI governance ensures the ethical, secure, and compliant use of AI across the enterprise. By adopting a robust responsible AI framework, your organisation is proactively managing regulatory, compliance, security, and reputational risks associated with production AI. This is particularly relevant in Australia where data protection and privacy are top-of-mind for both consumers and regulators.

Core Governance Areas for AI Security

Effective AI risk mitigation and governance defines and documents clear processes for critical areas:

- Data Privacy and Security: Implement robust data security and privacy standards to mitigate risks from data breaches and cyberattacks. This involves safeguarding sensitive information (e.g., through data anonymisation) during both training and inference processes. A powerful and rigorous technique for achieving this is Differential Privacy (DP), a mathematical framework that quantifies and limits the risk that an individual’s confidential data may be learned from a released analysis.

- Asset Management and Third-Party Risk: Maintaining inventory and control over AI models and datasets is crucial. Governance also extends to managing the security risks associated with open-source AI components and vendor solutions.

- Compliance Standards: Ensuring alignment with regulatory requirements and industry frameworks is paramount. Your framework must define policies that guarantee compliance with legal standards, reducing the risk of violations and enhancing trust.
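
To show what Differential Privacy looks like in practice, the sketch below applies the Laplace mechanism to a counting query: noise with scale `sensitivity / epsilon` is added to the true count, so a smaller `epsilon` means stronger privacy and a noisier answer. This is a teaching sketch only. The records and the `epsilon` value are hypothetical, and production systems should rely on a vetted DP library rather than hand-rolled floating-point noise.

```python
import random
from typing import List


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponentials with mean `scale`."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records: List[bool], epsilon: float, rng: random.Random) -> float:
    """Epsilon-differentially-private count of True records via the Laplace mechanism."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    # Adding or removing one person changes a count by at most 1.
    sensitivity = 1.0
    return sum(records) + laplace_noise(sensitivity / epsilon, rng)


# Hypothetical dataset: 40 of 100 users have a given health condition.
records = [True] * 40 + [False] * 60
noisy_total = private_count(records, epsilon=0.5, rng=random.Random(7))
print(round(noisy_total, 2))
```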

When you implement a responsible AI framework, you are formalising the controls necessary to ensure your systems remain robust, reliable, and secure, minimising the risk of unintended consequences and providing the auditability regulators demand.

Jhavtech’s Security Commitment

We integrate DevSecOps into every AI project, ensuring continuous security monitoring from development to deployment. Our AI apps are built to meet GDPR and HIPAA requirements and the ISO 27001 standard, which is especially crucial for industries like Healthcare and Wellness.

We also offer a Free Code Review to identify potential security loopholes before scaling your app.

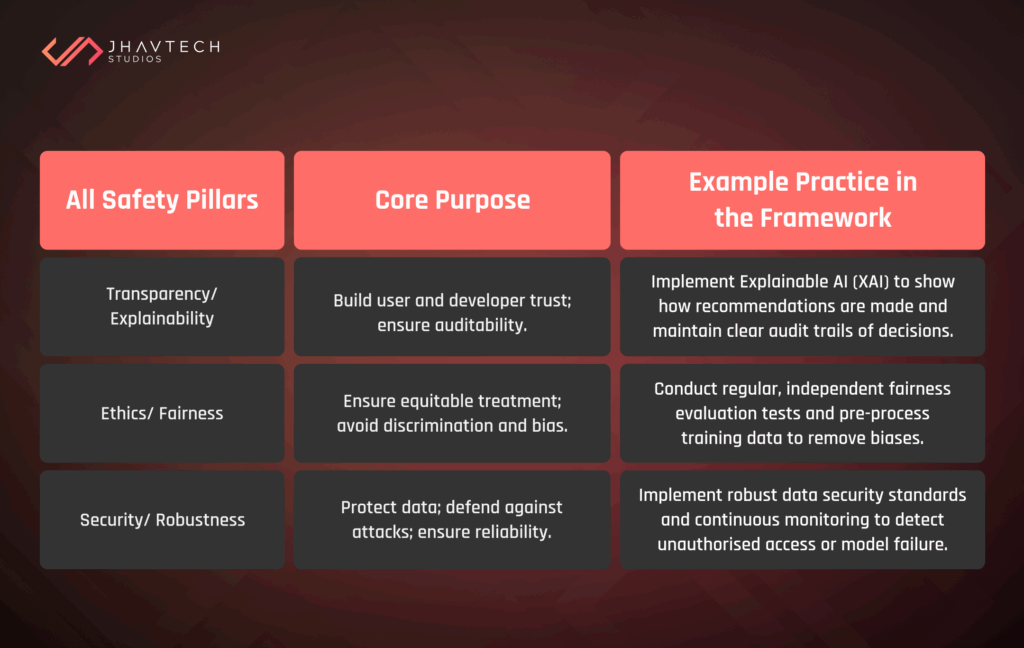

4. Combining Transparency, Ethics, and Security for Real Impact

The key insight for business leaders is that AI safety is not achieved by focusing on one factor alone. The most trustworthy and scalable AI solutions integrate transparency, ethics, and security as part of their DNA. These three pillars are unified and enforced by the overarching responsible AI framework.

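Here is how these core elements work together to create a trustworthy system: transparency makes every decision explainable and auditable, ethics keeps outcomes fair across user groups, and security protects the data that both depend on.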

By aligning these three pillars within a single, consistent responsible AI framework, businesses can deliver AI apps that are not only powerful but institutionally trustworthy. This structured approach helps alleviate uncertainties related to AI deployment by providing visibility into the underlying processes, ensuring data is reliable and accurate.

5. Why Businesses Must Prioritise Ethical AI in 2026

The World Economic Forum predicts that over 80% of enterprise software will have AI features within the next few years. In this competitive landscape, trust is the real differentiator. The brands that will lead the next generation of digital innovation are those that answer key user and regulator questions before being asked:

- How is their data being used?

- Can they trust the AI’s decisions?

- What happens when something goes wrong?

The business case for adopting a responsible AI framework is no longer just about compliance; it is about competitive advantage and risk mitigation. General principles of “ethics” are insufficient to meet regulatory demands; you need a documented framework that provides clear accountability. The cost of getting it wrong, through reputational damage or regulatory fines, far outweighs the cost of proactive governance. A strong framework helps secure executive sponsorship for AI programs and aligns the company’s vision with the expectations of responsible technology use.

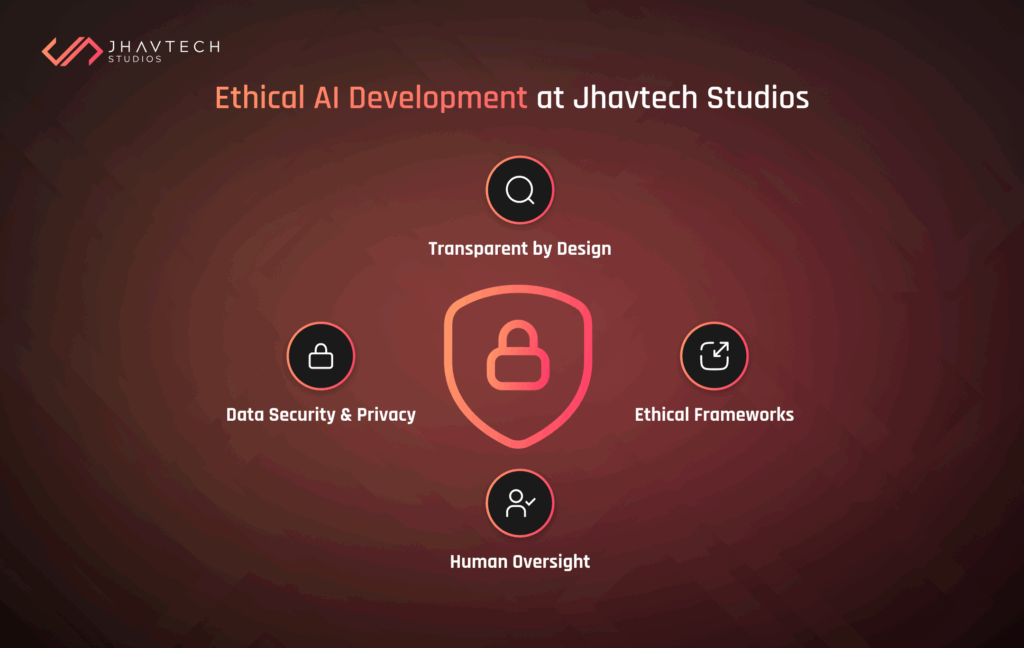

6. How Jhavtech Studios Leads in Ethical AI Development

At Jhavtech Studios, we do not just develop AI apps; we build AI systems you can explain, trust, and scale efficiently. Our approach is founded on the principles of a comprehensive responsible AI framework that covers the entire AI lifecycle. We blend award-winning development practices with rigorous governance protocols to ensure maximum reliability and compliance.

Here is what sets our AI app development apart:

- Transparent by Design: Every AI model we build is auditable and explainable. We implement XAI tools to ensure model transparency and traceability, giving you confidence in every decision.

- Ethical Frameworks: We adopt fairness-first AI principles across all projects. Our teams apply the best practices for preventing AI bias in application development, using diverse data sources and running continuous fairness checks.

- Data Security and Privacy: We follow best-in-class encryption and compliance protocols, aligning with global standards like ISO 27001 and GDPR, which is especially critical for data-sensitive sectors like Healthcare and Finance.

- Human Oversight and Accountability: Our developers and governance teams monitor, test, and refine AI outcomes continuously, ensuring that all AI decisions are subject to appropriate human judgment when necessary.

From idea to deployment, our team ensures every AI feature supports both business goals and the highest ethical standards, providing you with a scalable, defensible AI solution.

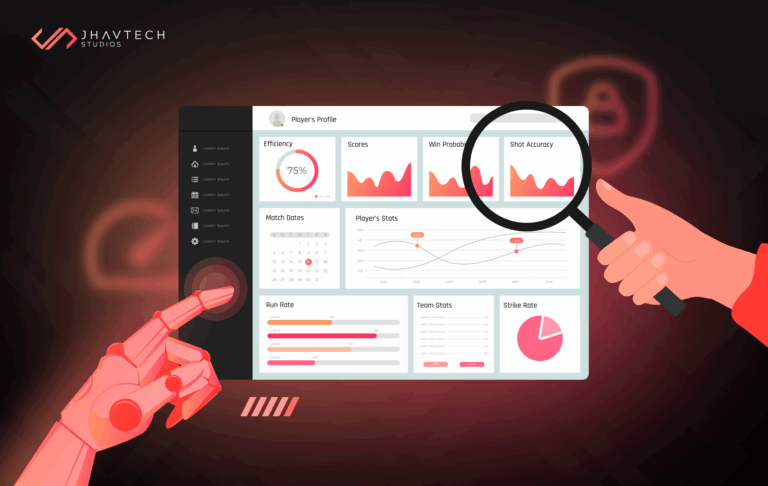

7. Futureproofing Your AI App: How to Establish Continuous Monitoring for AI Model Drift and Performance

Building an AI app is not a one-time project; it is about establishing a continuously evolving ecosystem. The initial implementation of your responsible AI framework is only the first step; the subsequent challenge lies in maintaining its effectiveness.

Even the fairest and most transparent model can degrade or “drift” over time. As real-world data changes, the model’s performance may become unreliable or even non-compliant with its initial ethical settings. If a deployed model violates regulatory principles months after launch, your organisation remains liable.

This is why understanding how to establish continuous monitoring for AI model drift and performance is a mandatory operational requirement. AI governance is an evolutionary process that must adapt as AI scales and operations grow.

Key Monitoring Practices:

- Continuous Validation: Implement continuous monitoring of AI models in production to detect and remediate drifts and performance degradation, which helps to ensure the continued reliability of AI outcomes.

- Auditability and Feedback Loops: Integrate this monitoring with regular audits of your AI systems to maintain clear documentation, verify adherence to established policies, and identify areas for improvement.

- Measure Key Metrics: Companies need to define and track the right AI governance metrics across key areas, including compliance, system performance and outcomes, risk management, and ethical implications.
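
A common way to detect the drift described above is the Population Stability Index (PSI), which compares how model inputs or scores are distributed in production against a baseline. The sketch below is a minimal illustration: the bin edges and sample scores are hypothetical, and the 0.1 and 0.25 alert levels are conventional rules of thumb, assumed here rather than prescribed by any standard.

```python
import math
from typing import List, Sequence


def bin_shares(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Share of values in each bin defined by sorted `edges`, floored to avoid log(0)."""
    counts = [0] * (len(edges) + 1)
    for value in values:
        # The bin index is the number of edges the value has reached or passed.
        counts[sum(1 for edge in edges if value >= edge)] += 1
    total = len(values)
    return [max(count / total, 1e-6) for count in counts]


def population_stability_index(
    baseline: Sequence[float], current: Sequence[float], edges: Sequence[float]
) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    expected = bin_shares(baseline, edges)
    actual = bin_shares(current, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def drift_status(psi: float) -> str:
    """Map a PSI value to an alert level using assumed rule-of-thumb thresholds."""
    if psi < 0.1:
        return "stable"
    return "monitor" if psi < 0.25 else "investigate"


# Hypothetical model scores: evenly spread at launch, shifted upwards in production.
edges = [0.25, 0.5, 0.75]
baseline_scores = [i / 100 for i in range(100)]
production_scores = [0.5 + i / 200 for i in range(100)]

psi = population_stability_index(baseline_scores, production_scores, edges)
print(round(psi, 3), drift_status(psi))
```

Running a check like this on a schedule and logging the result alongside fairness and performance metrics gives the audit trail a time series to review.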

At Jhavtech, we offer ongoing post-launch AI audits and security updates to ensure your app stays compliant with emerging standards and threats, proactively addressing AI risks before they turn into costly problems.

Conclusion: Trust Is the Real Competitive Advantage

AI can automate, predict, and personalise, but it cannot replace trust. When your users, stakeholders, and regulators feel safe, they stay loyal and supportive.

By choosing Jhavtech Studios as your AI app development partner, you are not just adopting cutting-edge technology; you are establishing a verifiable, scalable, and responsible AI framework that serves as the foundation for ethical innovation and long-term business growth. We help you move from ethical ambition to operational reality.

Ready to create an AI app that is transparent, ethical, and secure? Contact Jhavtech Studios today to start your AI journey.

Frequently Asked Questions

1. What is AI transparency?

AI transparency, often achieved through explainable AI implementation, means users and developers can understand how an AI makes decisions, improving accountability and trust. It involves documenting how data is selected and how the model arrives at its outputs.

2. How do you ensure AI ethics in app development?

By adopting a formal responsible AI framework that mandates the use of fairness constraints, rigorous testing for bias, using inclusive datasets, and aligning development with global ethical standards to ensure equitable outcomes.

3. Can AI apps be fully secure?

No system can be guaranteed fully secure, but AI apps can be made highly resilient when developed under a strict AI risk mitigation and governance policy. This involves using end-to-end encryption, implementing robust data security standards, and performing continuous monitoring to defend the system against adversarial attacks and ensure ongoing compliance.
