⚡ Key Takeaway: AI-written code often fails at scale because Large Language Models (LLMs) lack architectural awareness and do not account for long-term maintainability. While generative AI excels at syntax and boilerplate, it frequently introduces hidden technical debt and non-deterministic bugs. Enterprise-grade stability requires Human-in-the-Loop AI Engineering to ensure systemic integrity, security compliance, and performance optimisation in production environments.

The Illusion of Efficiency: Why “Vibe Coding” Fails the Enterprise

The rise of “vibe coding”, the practice of prompting an AI until the code “looks” right, has revolutionised rapid prototyping. However, there is a massive chasm between a functioning script and a scalable production system. In the fast-paced world of app development, shipping code that merely “works” on a local machine is a recipe for disaster once that code hits a live environment. Code generated in a vacuum lacks the engineering intuition required to survive high-concurrency workloads.

In 2026, the industry is moving away from pure automation. We are seeing a shift where the value is no longer in the generation of code, but in the architectural auditing of that code. Without a professional audit, AI-generated snippets act like “black boxes” that work in isolation but collapse when integrated into complex, legacy, or high-traffic infrastructures. This is why many organisations are turning to specialised IT services to bridge the gap between AI-generated drafts and production-ready systems.

The Three Pillars of Failure in AI-Generated Codebases

To understand why AI-written code breaks, we must look at the three primary areas where LLMs struggle: structural context, state management, and edge-case anticipation. In an era where speed is often mistaken for progress, these pillars represent the structural fault lines where a seemingly perfect prompt-to-code workflow inevitably shatters under the weight of enterprise demands.

1. Lack of Global Architectural Awareness

An LLM processes code as “tokens” within a limited context “window”. It sees the function it is currently writing but often has no view of the broader system architecture. This leads to:

- Redundant Logic: Multiple AI-generated modules performing the same task in slightly different ways.

- Incompatible Dependencies: Using library versions that conflict with the global project manifest.

- Circular Dependencies: Creating import or call cycles between modules that are difficult to trace and debug at runtime.
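
Circular dependencies, at least, can be caught mechanically before a human review. The sketch below assumes the module import graph has already been extracted into a plain dictionary (how you build it depends on your language and tooling, and the module names are hypothetical); it uses a standard depth-first search to report the first cycle it finds.

```python
from typing import Dict, List, Optional


def find_cycle(graph: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one dependency cycle as a list of module names, or None.

    Depth-first search with three states per node:
    WHITE = not yet visited, GREY = on the current DFS path,
    BLACK = fully explored. Reaching a GREY node again is a back edge,
    which means the graph contains a cycle.
    Recursive for brevity; very large graphs would need an iterative version.
    """
    WHITE, GREY, BLACK = 0, 1, 2
    state = {node: WHITE for node in graph}
    path: List[str] = []

    def visit(node: str) -> Optional[List[str]]:
        state[node] = GREY
        path.append(node)
        for dep in graph.get(node, []):
            dep_state = state.get(dep, WHITE)
            if dep_state == GREY:
                # Back edge: the cycle is the path from `dep` to here, closed by `dep`.
                return path[path.index(dep):] + [dep]
            if dep_state == WHITE:
                cycle = visit(dep)
                if cycle:
                    return cycle
        path.pop()
        state[node] = BLACK
        return None

    for node in list(graph):
        if state[node] == WHITE:
            cycle = visit(node)
            if cycle:
                return cycle
    return None


# Hypothetical module graph: each key imports the modules in its list.
modules = {
    "billing": ["invoices"],
    "invoices": ["customers"],
    "customers": ["billing"],  # closes the loop
    "reports": ["invoices"],
}
print(find_cycle(modules))  # ['billing', 'invoices', 'customers', 'billing']
```

A check like this fits naturally into CI, so a cycle introduced by a generated module fails the build instead of surfacing as a confusing runtime error.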

2. The Non-Deterministic Nature of LLMs

Conventional software is deterministic: input A always produces output B. LLMs are probabilistic: the same prompt can yield different code on different runs, and nothing guarantees that the pattern chosen is the robust one. If an AI generates a core component of your database logic, it might use a pattern that works 90% of the time but fails under specific race conditions or high-latency scenarios.

Without human-led stress testing, these failures only appear once the product is live. Rigorous code review, backed by that stress testing, is the most reliable way to catch these probabilistic errors before they impact the end user. A human reviewer understands the “why” behind the code, whereas an AI only predicts the “likely next token”.
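
To make the race-condition point concrete, here is a minimal Python sketch of the classic check-then-act bug and its fix. The `Account` class, the amounts, and the thread count are all hypothetical. The unsafe method is included only for contrast: whether it misbehaves depends on thread scheduling, which is exactly why such bugs can pass every local test.

```python
import threading


class Account:
    """Toy account used to illustrate a check-then-act race (hypothetical)."""

    def __init__(self, balance: int) -> None:
        self.balance = balance
        self._lock = threading.Lock()

    def withdraw_unsafe(self, amount: int) -> bool:
        # Check-then-act without a lock: two threads can both pass the check
        # before either one subtracts, overdrawing the account. Whether this
        # happens depends on scheduling, so it may never show up locally.
        if self.balance >= amount:
            self.balance -= amount
            return True
        return False

    def withdraw(self, amount: int) -> bool:
        # Holding the lock makes the check and the update one atomic step.
        with self._lock:
            if self.balance >= amount:
                self.balance -= amount
                return True
            return False


def run(account: Account, workers: int = 50, amount: int = 10) -> int:
    """Fire `workers` concurrent withdrawals and return how many succeeded."""
    results = []
    results_lock = threading.Lock()

    def task() -> None:
        ok = account.withdraw(amount)
        with results_lock:
            results.append(ok)

    threads = [threading.Thread(target=task) for _ in range(workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sum(results)


acct = Account(balance=100)
print(run(acct), acct.balance)  # 10 0
```

With the lock in place, 50 concurrent withdrawals of 10 against a balance of 100 always yield exactly 10 successes and a final balance of 0.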

3. The “Last Mile” Security Gap

AI models are trained on public repositories, which unfortunately include insecure coding patterns. As a result, AI often prioritises “functional” code over “secure” code. This creates vulnerabilities like:

- Insecure API endpoints that lack proper authorisation.

- Hardcoded credentials left in plain sight.

- Missing input validation and unparameterised queries, leaving the system open to injection attacks.
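
Two of these gaps are easy to illustrate. The Python sketch below uses the standard-library `sqlite3` module with an in-memory database; the `users` table and the `APP_DB_PASSWORD` environment variable are hypothetical. It contrasts string-built SQL, which an injection payload can rewrite, with a parameterised query, and reads a secret from the environment instead of hardcoding it.

```python
import os
import sqlite3


def get_db_password() -> str:
    # Secrets come from the environment (or a secrets manager), never from
    # source code. APP_DB_PASSWORD is a hypothetical variable name.
    password = os.environ.get("APP_DB_PASSWORD")
    if password is None:
        raise RuntimeError("APP_DB_PASSWORD is not set")
    return password


def find_user_unsafe(conn: sqlite3.Connection, username: str) -> list:
    # Vulnerable: user input is pasted into the SQL text, so crafted input
    # can change what the query means.
    sql = f"SELECT id, username FROM users WHERE username = '{username}'"
    return conn.execute(sql).fetchall()


def find_user(conn: sqlite3.Connection, username: str) -> list:
    # Safe: the value is sent separately as a bound parameter and is never
    # interpreted as SQL.
    sql = "SELECT id, username FROM users WHERE username = ?"
    return conn.execute(sql, (username,)).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
conn.executemany("INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)])

payload = "x' OR '1'='1"
print(len(find_user_unsafe(conn, payload)))  # 2: every row is returned
print(len(find_user(conn, payload)))         # 0: treated as a literal name
```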

Human-in-the-Loop AI Engineering: The 2026 Standard

To mitigate these risks, Jhavtech Studios advocates for a Human-in-the-Loop (HITL) approach. This isn’t about slowing down; it’s about “Engineering Intuition”: the ability to look at a block of AI code and recognise that, while it works, it isn’t right for the specific business logic or scaling requirements. In 2026, relying on unvetted automation is no longer a competitive advantage but a structural liability. By centring human expertise, we transform AI from a black-box generator into a high-octane engine steered by professional architectural judgement and engineering rigour.

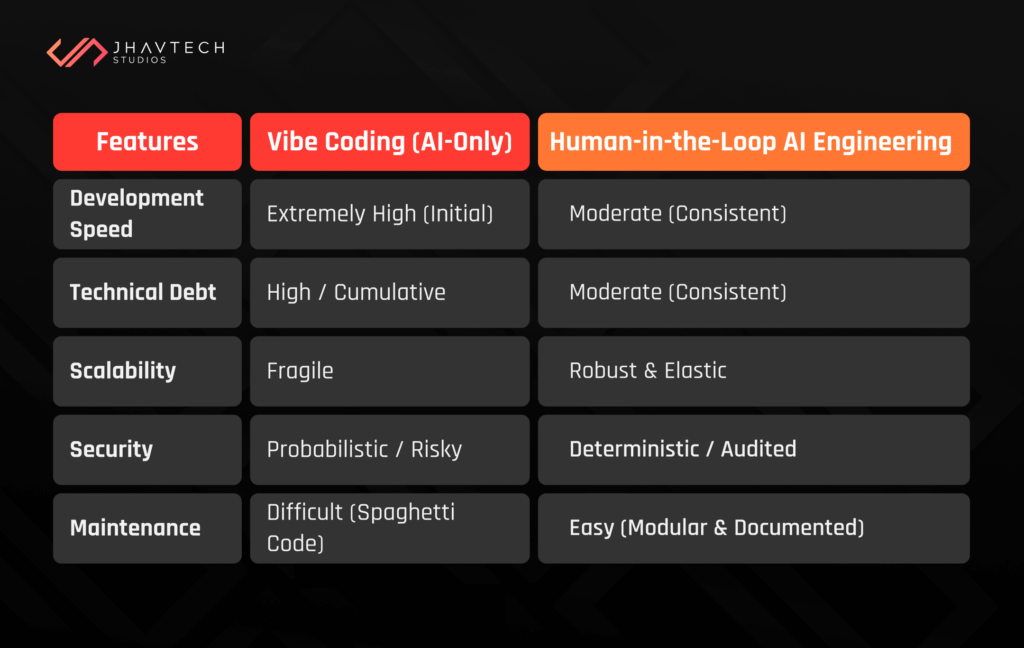

Comparison: Vibe Coding vs. Production-Ready Engineering

The following table highlights why professional oversight is essential for enterprise-grade software:

Why Scaling Changes Everything

A script that handles 10 users is fundamentally different from a system handling 10,000 concurrent requests. AI-written code often ignores algorithmic complexity, as expressed in Big O notation. While a generative model can produce code that functions in a controlled development environment, it rarely accounts for the quadratic (or worse) performance degradation that occurs as data volume increases. When these unoptimised systems begin to fail under load, the resulting chaos often requires a software project rescue operation. This involves untangling layers of inefficient AI logic that have been piled on top of each other, causing the entire infrastructure to groan under its own weight.

The Hidden Costs of Algorithmic Inefficiency

Scaling is not merely about adding more server capacity; it is about how gracefully an application handles the “physics” of data processing. AI models frequently default to the most statistically common solution found in their training data rather than the most computationally efficient one.

- Inefficient Data Structures: An AI might suggest a simple array for a task that requires a hash map, turning each lookup from O(1) on average into O(n). Inside a loop, that becomes O(n²) work that can paralyse a system at enterprise scale.

- Database Deadlocks: AI-generated scripts often ignore transaction isolation levels and consistent lock ordering, leading to deadlocks and heavy lock contention when thousands of users attempt simultaneous writes.

- Network Latency Neglect: LLMs frequently write “chatty” code that issues an API call or database query on every iteration of a loop (the classic “N+1 query” pattern). The cost is unnoticeable with local data but creates massive bottlenecks in a distributed cloud environment.
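
The array-versus-hash-map and N+1 points often appear together. The sketch below is a hypothetical example against an in-memory SQLite table: the “chatty” version issues one query per id, while the batched version issues a single `IN (...)` query and then uses a dictionary for lookups. With a local in-memory database the difference is negligible; the point is the number of round trips once each query crosses a network.

```python
import sqlite3
from typing import Dict, List, Optional


def setup_demo_db(n: int = 1000) -> sqlite3.Connection:
    # Hypothetical customers table with ids 1..n.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany(
        "INSERT INTO customers (id, name) VALUES (?, ?)",
        [(i, f"customer-{i}") for i in range(1, n + 1)],
    )
    return conn


def names_chatty(conn: sqlite3.Connection, ids: List[int]) -> List[Optional[str]]:
    # N+1 pattern: one query (one network round trip, in production) per id.
    names = []
    for cid in ids:
        row = conn.execute("SELECT name FROM customers WHERE id = ?", (cid,)).fetchone()
        names.append(row[0] if row else None)
    return names


def names_batched(conn: sqlite3.Connection, ids: List[int]) -> List[Optional[str]]:
    # One query for the whole batch, then a dict for O(1) average lookups
    # instead of scanning a list once per id. Very large id lists may need
    # chunking, because SQLite limits the number of bound parameters.
    if not ids:
        return []
    unique_ids = sorted(set(ids))
    # Only "?" markers are interpolated; the values stay bound parameters.
    placeholders = ",".join("?" * len(unique_ids))
    rows = conn.execute(
        f"SELECT id, name FROM customers WHERE id IN ({placeholders})", unique_ids
    ).fetchall()
    by_id: Dict[int, str] = dict(rows)
    return [by_id.get(cid) for cid in ids]


conn = setup_demo_db()
ids = [5, 42, 5, 9999]  # includes a duplicate and a missing id
print(names_batched(conn, ids))  # ['customer-5', 'customer-42', 'customer-5', None]
```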

Memory Leaks and Resource Exhaustion

AI often generates “greedy” code that consumes more memory or CPU cycles than necessary, for the same reason: it reproduces the most common pattern in its training data rather than the most efficient one. In high-concurrency scenarios, these minor inefficiencies do not just slow down the app; they compound until the system reaches a breaking point.

- Unreleased Resources: AI-written logic may fail to close database connections and file handles or release memory buffers, leading to “silent” leaks that eventually exhaust connection pools or crash the server.

- Skyrocketing Infrastructure Costs: Inefficient code forces companies to “throw hardware at the problem”, inflating cloud bills that professional architectural oversight could have kept in check.
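
For the unreleased-connection case, a minimal Python sketch of the fix is to tie the connection’s lifetime to a `with` block. The `events` table and the helper that creates a throwaway database file are hypothetical. One subtlety worth flagging in review: a `sqlite3` connection used directly as a context manager only commits or rolls back the transaction; it does not close the connection, so `contextlib.closing` is used here.

```python
import os
import sqlite3
import tempfile
from contextlib import closing


def count_events_leaky(path: str) -> int:
    conn = sqlite3.connect(path)
    # If execute() raises, close() is skipped and the connection (with its
    # memory and file handle) stays open until the garbage collector happens
    # to finalise it, which is not guaranteed to be prompt.
    count = conn.execute("SELECT COUNT(*) FROM events").fetchone()[0]
    conn.close()
    return count


def count_events(path: str) -> int:
    # closing() calls conn.close() on every exit path, including exceptions.
    # "with sqlite3.connect(path) as conn:" would NOT do this: the sqlite3
    # connection's own context manager only commits or rolls back.
    with closing(sqlite3.connect(path)) as conn:
        return conn.execute("SELECT COUNT(*) FROM events").fetchone()[0]


def make_demo_db(rows: int) -> str:
    # Hypothetical helper: a throwaway database file with an "events" table.
    fd, path = tempfile.mkstemp(suffix=".db")
    os.close(fd)
    with closing(sqlite3.connect(path)) as conn:
        conn.execute("CREATE TABLE events (id INTEGER PRIMARY KEY)")
        conn.executemany("INSERT INTO events (id) VALUES (?)", [(i,) for i in range(rows)])
        conn.commit()
    return path


path = make_demo_db(3)
print(count_events(path))  # 3
os.remove(path)
```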

The Psychology of “Automation Bias”

There is a psychological trap known as Automation Bias, where developers become over-reliant on AI suggestions. When a developer stops questioning the “why” behind a line of code, the architectural integrity of the project begins to erode. Professional engineering requires a sceptical mindset that constantly asks, “How will this break when the database hits 1TB?” Without this human-led interrogation, a project becomes a house of cards: functional in appearance but structurally incapable of supporting real-world growth.

Strategic SOPs for Implementing AI Code at Scale

For startups and enterprises aiming for a 2026 MVP or a full-scale rollout, we recommend the following Standard Operating Procedures (SOPs). These protocols ensure that the speed of generative AI is balanced with the stability required for enterprise app development.

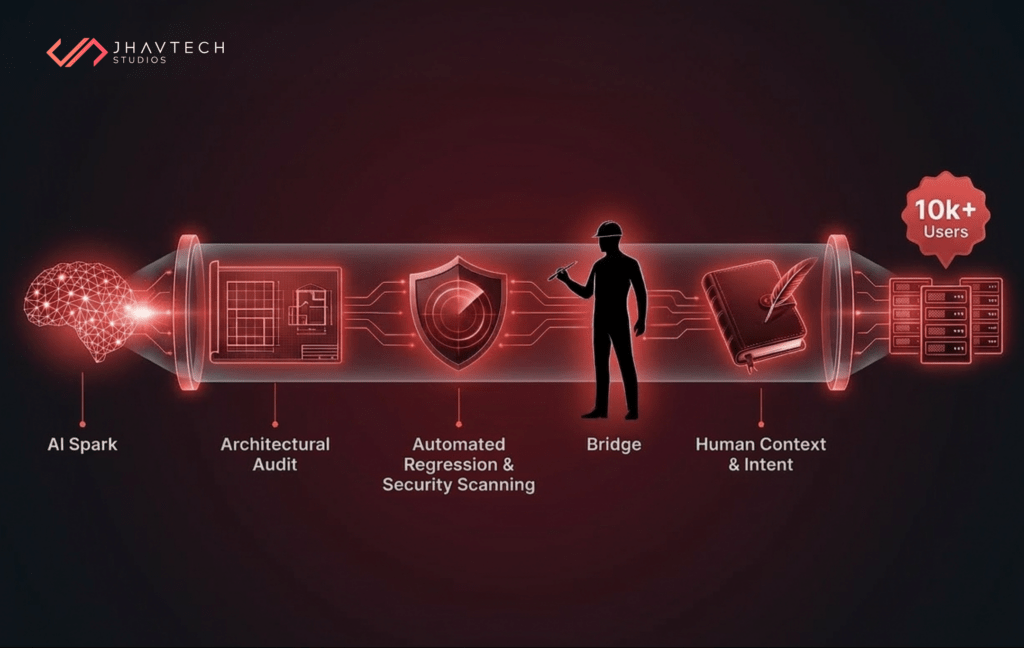

1. Mandatory Architectural Audits

Every module generated by an AI must be reviewed by a senior architect. This review isn’t just for syntax but for architectural fit within the codebase, ensuring the new code aligns with the long-term roadmap and doesn’t introduce circular dependencies.

In a professional IT consulting framework, this audit acts as a “sanity check” against AI hallucinations. An architect evaluates the code’s “fit” within the existing ecosystem, ensuring that a new AI-generated feature doesn’t inadvertently break a legacy integration or violate global security protocols.

2. Automated Regression and AI-Specific Testing

Since AI-generated code is prone to “hallucinated” logic, your CI/CD pipeline must include rigorous regression testing. You cannot assume that because the code compiled, it is safe to deploy.

- Edge Case Testing: Specifically target inputs that an LLM might not have considered, such as null values or unexpected data formats.

- Load Testing: Simulate peak traffic to see if the AI-generated logic holds up under pressure or if it triggers a memory leak.

- Security Scanning: Integrate automated tools to scan for the “Last Mile” security gaps, such as hardcoded credentials or insecure API endpoints.
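
An edge-case test suite does not need to be elaborate. The sketch below uses Python’s built-in `unittest` against a hypothetical `parse_quantity` helper of the kind an LLM might generate for an order form; the cases target exactly the inputs a prompt rarely mentions: `None`, empty or whitespace-only strings, negatives, zero, decimals, scientific notation, and non-ASCII digits.

```python
import unittest
from typing import Optional


def parse_quantity(raw: Optional[str]) -> int:
    """Parse an order quantity from untrusted input (hypothetical helper).

    Accepts only positive whole numbers written with ASCII digits;
    everything else raises ValueError.
    """
    if raw is None:
        raise ValueError("quantity is required")
    text = raw.strip()
    # isascii() guards against Unicode digits such as "²" that isdigit() accepts.
    if not (text.isascii() and text.isdigit()):
        raise ValueError(f"invalid quantity: {raw!r}")
    value = int(text)
    if value == 0:
        raise ValueError("quantity must be positive")
    return value


class ParseQuantityEdgeCases(unittest.TestCase):
    def test_plain_number(self):
        self.assertEqual(parse_quantity("3"), 3)

    def test_surrounding_whitespace_is_tolerated(self):
        self.assertEqual(parse_quantity(" 7 "), 7)

    def test_rejects_missing_and_malformed_input(self):
        for bad in (None, "", "   ", "-1", "0", "2.5", "ten", "1e3", "²"):
            with self.subTest(bad=bad):
                with self.assertRaises(ValueError):
                    parse_quantity(bad)
```

Saved as a test module, it can be run with `python -m unittest` as one stage of the CI pipeline, alongside load tests and security scans.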

3. Documentation with Human Context

AI can write documentation, but it cannot explain the intent behind a business decision. Human engineers must document the “why”—the trade-offs made during the development process that an AI wouldn’t understand.

This human-centric documentation is vital for long-term maintenance. While an AI can describe what the code does, only a human can explain why a specific optimisation was chosen over a more common (but less efficient) alternative. This context is what prevents future developers from accidentally reintroducing technical debt when they later revisit or refactor the code.
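
As an illustration of documenting the “why”, the hypothetical function below pairs a short description of what the code does with the trade-off behind it. The business context in the docstring is invented for the example; the point is the shape of the comment, which records the decision a future reader (or an AI assistant) would otherwise be tempted to “simplify” away.

```python
import bisect
from typing import List


def discount_tier(order_total_cents: int, thresholds: List[int]) -> int:
    """Return the discount tier for an order total.

    What: the number of tier thresholds the total meets or exceeds
    (0 = no discount).

    Why (hypothetical context, for illustration): `thresholds` is loaded once
    at startup and kept sorted, and this function runs on every cart update.
    We therefore binary-search it with bisect (O(log n)) rather than using the
    more common linear scan. Do not "simplify" this into a loop, and keep the
    list sorted if you change how it is loaded.
    """
    return bisect.bisect_right(thresholds, order_total_cents)


tiers = [1_000, 5_000, 10_000]  # hypothetical thresholds, in cents
print(discount_tier(5_000, tiers))  # 2: meets the 1,000 and 5,000 thresholds
```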

The Role of Topical Authority in Technical Content

In the age of AI search agents, simply having a “how-to” guide is not enough. To rank and provide value, technical content must demonstrate topical authority. This means moving beyond generic coding tips and providing deep, information-dense insights into the intersection of AI capability and human expertise.

By focusing on Human-in-the-Loop AI Engineering, we aren’t just writing code; we are building sustainable digital assets. This approach ensures that the MVP you build today doesn’t become the technical nightmare of tomorrow.

Final Thoughts: Engineering the Future, Not Just Prompting It

AI is a powerful tool, but it is not an engineer. Real-world scale is unforgiving of shortcuts and “vibe-based” logic. To build software that lasts, companies must combine the speed of AI with the rigorous auditing and architectural intuition of seasoned human professionals.

At Jhavtech Studios, we believe the future of development is collaborative. By leveraging AI for what it does best (i.e., rapid iteration) and keeping humans in the loop for what they do best (i.e., strategic oversight), we create systems that don’t just work—they scale.

Don’t let your AI-generated momentum turn into a scaling disaster. Whether you’re scaling a new MVP or need to untangle existing technical debt, the difference between failure and growth is a professional eye.
