Summary Block: This analysis from Jhavtech Studios explores the “trust paradox” of 2026, where founder over-reliance on Generative AI for software development creates long-term technical debt and security vulnerabilities. While AI assistants increase initial velocity, they require a 3x higher “Verification Burden” from senior engineers. We define the primary risks as “Vibe Coding” (relying on high-level descriptions rather than technical requirements) and context blindness, which leads to edge-case failures. The sustainable solution for startups is a Human-in-the-Loop (HITL) framework, positioning AI as an accelerator rather than an architect.

Introduction: The 2026 Trust Paradox in Tech Startups

The pressure on startup founders in 2026 is relentless: achieve profitability faster, innovate constantly, and operate on leaner budgets. In this environment, the promise of near-autonomous, AI-driven software development is intoxicating. AI agents can now generate entire codebases, manage CI/CD pipelines, and patch bugs with minimal human intervention.

For many founders, particularly those from non-technical backgrounds, this looks like the ultimate competitive advantage: a way to replace expensive engineering teams with digital assets. However, this has created what we at Jhavtech Studios call the “2026 Trust Paradox.”

The paradox is this: while AI adoption in development is at an all-time high (a growing number of startups utilise some form of agentic AI), professional distrust in AI accuracy has simultaneously jumped to 46% (up from just 15% in 2023).

Founders are not over-trusting AI because they are naive; they are over-trusting it because the initial results are seductive, and the true cost, the “Verification Burden,” stays hidden until it is too late. This document analyses why this over-reliance occurs, the specific technical risks involved, and the essential Human-in-the-Loop (HITL) protocol required for sustainable software builds.

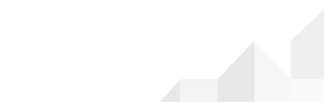

What Founders Get Right (and Wrong) About AI Velocity

It is important to validate why founders trust AI. AI is not a gimmick. In 2026, tools like Copilot X and next-generation AI agents provide unprecedented acceleration in the implementation phase of software development. As highlighted in McKinsey research on generative AI in software development, developers can complete many coding tasks significantly faster with AI assistance.

The Seductive Nature of Initial Velocity

For a founder needing a Prototype or an MVP to secure a seed round, AI is a miracle. It can take a text prompt and render a functional UI with basic CRUD (Create, Read, Update, Delete) capabilities in minutes. This immediate feedback loop creates a massive dopamine hit and an illusion of competence.

The AI feels brilliant because it rapidly resolves the simple, highly-documented problems that occupy a significant portion of early-stage development. The founder sees tangible progress (i.e. screens that load, buttons that work) and extrapolates that this same velocity will continue throughout the lifecycle of the application.

The Fallacy: “AI Code is Finished Code”

The fatal error occurs when the founder views this AI-generated code as a finished product rather than a raw material.

This is where the over-trust begins. Instead of recognising AI as an accelerator that still requires rigorous validation by a senior architect, the founder sees AI as the architect itself. They assume that if the code runs, the code is correct. As our Jhavtech Director, Rahul Jhaveri, often emphasises during client consults, “Compilable code is very different from resilient, scalable business logic.”

Why Non-Technical Founders Are Most at Risk

While all teams can fall into this trap, non-technical founders are disproportionately vulnerable. Without deep engineering experience, they often miss the nuance of why software is expensive.

The Rise of “Vibe Coding” as a Liability

In 2026, we’ve observed a trend we call “Vibe Coding.” This is what happens when development shifts from meticulous technical requirements to AI agents interpreting high-level descriptions, or “vibes.”

A founder might prompt, “Build a secure authentication system that integrates with Stripe.” The AI will generate code that performs those specific tasks. The founder checks it, the user flows appear correct, and the “vibe” is positive. However, they lack the technical capability to audit how the AI achieved it. Was the session management secure? Are the API keys properly obscured? Are the Stripe integration’s edge cases, like payment failures or SCA (Strong Customer Authentication) challenges, properly mapped?

“Vibe Coding” creates an environment where technical decisions are made by an LLM guessing intent, rather than an engineer applying principle.
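To make those audit questions concrete, here is a minimal sketch of what an engineer would expect to see spelled out explicitly. The status strings follow Stripe's documented PaymentIntent statuses as we understand them. The follow-up action names, the `PAYMENTS_SECRET_KEY` variable, and both helper functions are hypothetical and exist only for illustration.

```python
import os

# Status strings are assumed to match Stripe's PaymentIntent statuses;
# the follow-up actions on the right are hypothetical names.
NEXT_STEP = {
    "succeeded": "fulfil_order",
    "processing": "wait_for_webhook",
    "requires_action": "prompt_customer_for_sca",
    "requires_payment_method": "ask_for_new_payment_method",
    "canceled": "release_reserved_stock",
}


def next_step_for(status: str) -> str:
    """Return the follow-up action for a payment status, or fail loudly."""
    try:
        return NEXT_STEP[status]
    except KeyError:
        # An unknown status is an unmapped edge case, not a success.
        raise ValueError(f"unhandled payment status: {status!r}")


def load_api_key(env_var: str = "PAYMENTS_SECRET_KEY") -> str:
    """Read the secret key from the environment instead of hard-coding it."""
    key = os.environ.get(env_var)
    if not key:
        raise RuntimeError(f"{env_var} is not set")
    return key
```

The point of the sketch is the failure mode: an unmapped status raises an error rather than silently falling through to the "happy path," which is exactly the behaviour a surface-level check of the user flow cannot confirm.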

The “If It Isn’t Broken, Don’t Pay to Fix It” Mentality

A technical leader (CTO) understands that code quality is about maintainability and security, not just current functionality. A non-technical founder often focuses only on current functionality.

When AI produces a module quickly, and a senior human engineer suggests it needs to be rewritten for performance or security reasons, the founder perceives this as unnecessary overhead. They trust the AI over their own senior team because the AI’s output costs less (initially) and appears to satisfy the requirement. This trust is built on a fundamental misunderstanding of technical debt.

The Technical Anatomy of AI Risks: Hallucination and Context Blindness

If we are to move beyond hype, we must understand the specific structural limitations of Generative AI in software development in 2026. This isn’t about AI being “bad”; it’s about understanding what it is uniquely bad at.

Hallucination: Subtle Errors at Scale

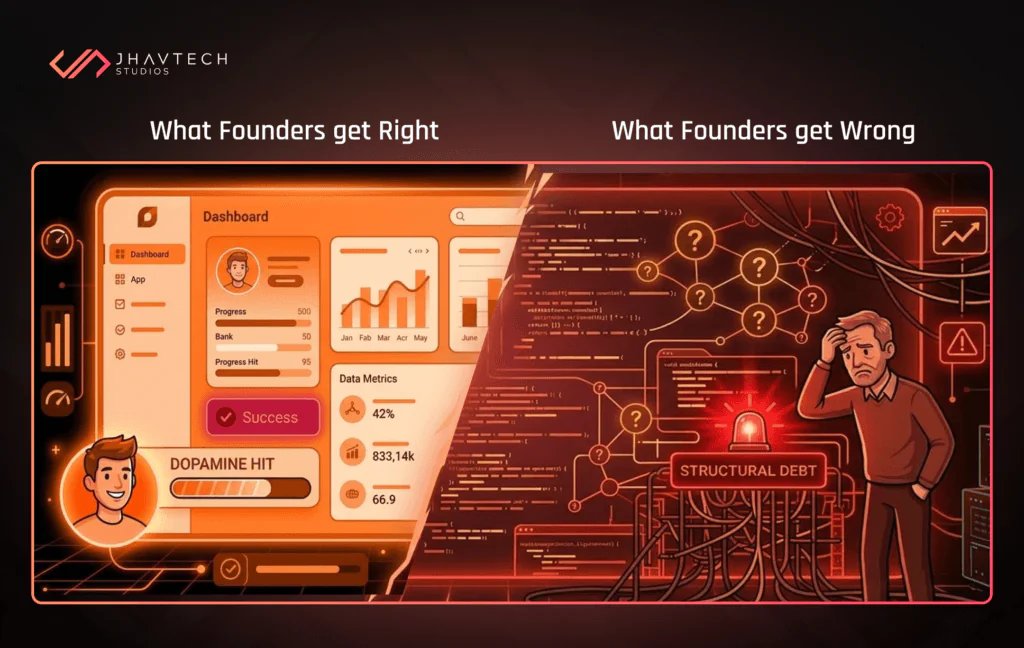

AI hallucination is no longer about generating fake facts; in 2026, it is about generating plausible-looking but subtly incorrect code.

LLMs are probability engines. If a developer uses a niche or recently updated framework (e.g., the latest version of Next.js or a specialised biometric auth SDK in mobile app development), the AI might generate code that uses deprecated methods or nonexistent properties. A junior engineer, eager to ship, might trust this output.
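One inexpensive guard against this class of error is to scan generated code for calls a team already knows are deprecated. The sketch below uses Python's standard `ast` module. The function name `find_deprecated_calls` and the example SDK method `old_fetch` are hypothetical, and a real project would more likely rely on its framework's own linter rules.

```python
import ast
from typing import List, Set, Tuple


def find_deprecated_calls(source: str, deprecated: Set[str]) -> List[Tuple[int, str]]:
    """Return (line number, name) for every call to a known-deprecated name."""
    hits = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        if isinstance(func, ast.Attribute):   # e.g. sdk.old_fetch()
            name = func.attr
        elif isinstance(func, ast.Name):      # e.g. old_fetch()
            name = func.id
        else:
            continue
        if name in deprecated:
            hits.append((node.lineno, name))
    return sorted(hits)


generated = "import sdk\nsdk.old_fetch()\nsdk.fetch()\n"
print(find_deprecated_calls(generated, {"old_fetch"}))
```

A check like this only catches what the team has already listed. It does nothing for properties that never existed, which is why human review remains necessary.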

Context Blindness and the Failure of Edge Cases

AI assistants operate with significant context windows (some managing up to 2M tokens), but they still struggle with the complex, nested business logic of a large-scale application. They understand the code in front of them, but they struggle to understand the business.

Consider a complex marketplace application. An AI agent might successfully write a function to calculate sales tax. However, that AI may lack the context to understand that the system must also handle:

- International tax nexus (VAT, GST) based on buyer and seller location.

- Prorated refunds on partial orders with differing tax rates.

- Zero-rating rules for specific B2B client classes.

A senior human engineer architecting this system thinks in terms of these “edge cases” from day one. An AI thinks in terms of the main logic branch. The over-trust happens when the founder assumes the AI has contemplated the other 5% of complexity that inevitably breaks the system.
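As an illustration of how two of the listed edge cases change the shape of the code, here is a minimal sketch covering zero-rating and prorated refunds. All names (`OrderLine`, `line_tax`, `refund_for`) and all rates are hypothetical, and the location-based nexus lookup is deliberately left out. Real tax rules vary by jurisdiction and need specialist input.

```python
from dataclasses import dataclass
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


@dataclass(frozen=True)
class OrderLine:
    net_amount: Decimal        # price before tax
    tax_rate: Decimal          # e.g. Decimal("0.10") for 10%
    zero_rated: bool = False   # e.g. a qualifying B2B buyer class


def line_tax(line: OrderLine) -> Decimal:
    """Tax for one line; zero-rated lines owe nothing regardless of rate."""
    if line.zero_rated:
        return Decimal("0.00")
    return (line.net_amount * line.tax_rate).quantize(CENT, rounding=ROUND_HALF_UP)


def refund_for(line: OrderLine, fraction: Decimal) -> Decimal:
    """Gross refund for a partial return, using that line's own tax rate."""
    if not Decimal("0") <= fraction <= Decimal("1"):
        raise ValueError("fraction must be between 0 and 1")
    gross = line.net_amount + line_tax(line)
    return (gross * fraction).quantize(CENT, rounding=ROUND_HALF_UP)
```

Tax is computed per line rather than per order so that a partial refund on an order with mixed rates returns the tax actually charged on the refunded items.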

The “Verification Burden”: The Hidden Cost of AI Code

Perhaps the single greatest misunderstanding that leads to over-trust is ignoring the “Verification Burden.”

A dangerous myth has propagated: that AI makes senior engineers “3x more productive.” This is only half-true. While AI can generate code 3x faster, the work of reviewing, refactoring, and validating that code grows with the volume of code generated and can quickly outweigh the time saved.

Based on Jhavtech’s internal project data, we’ve observed that for every hour an AI agent spends generating a complex feature, senior engineers often spend 3–4 hours on validation, testing, and refactoring. This is especially true for business-critical features where edge cases and system interactions are difficult for AI to fully anticipate.
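The ratio above lends itself to a quick back-of-envelope calculation. The sketch below is only arithmetic on the 3–4 hour figure; the function name and the 10-hour example are hypothetical and not drawn from any specific project.

```python
def total_engineering_hours(generation_hours: float, review_ratio: float) -> float:
    """Generation time plus senior review time at the given ratio."""
    if generation_hours < 0 or review_ratio < 0:
        raise ValueError("hours and ratio must be non-negative")
    return generation_hours * (1 + review_ratio)


# A feature that takes an AI agent 10 hours to generate:
low = total_engineering_hours(10, 3)    # 10 + 30 hours of review
high = total_engineering_hours(10, 4)   # 10 + 40 hours of review
print(low, high)
```

On those assumptions, a feature that looks like 10 hours of work on paper represents 40 to 50 hours of total effort once senior validation is counted.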

The Shift from Junior to Senior Validation

Historically, a senior engineer mentored a junior engineer. The junior wrote slower, documented better, and asked clarifying questions. Now, a senior engineer is tasked with validating massive output from a machine that doesn’t document its reasoning and cannot explain why it chose a particular logic path.

This creates the “Verification Burden.” The senior engineer is no longer just coding; they are performing technical forensic audits, a process that often evolves into a full-scale software project rescue when the initial AI-generated architecture proves too brittle for production.

In other words, the 3–4 hours of senior review per hour of AI generation noted above do not disappear. The cost (and the question of trust) is merely deferred.

Reclaiming Control: The Jhavtech Human-in-the-Loop (HITL) Protocol

We do not advocate for abandoning AI in software development. Quite the opposite; at Jhavtech, we embrace it. However, sustainable software builds in 2026 require a shift from viewing AI as an autonomous creator to viewing it as a monitored accelerator.

The antidote to over-trust is the establishment of a robust Human-in-the-Loop (HITL) protocol. This protocol defines exactly how, when, and where a senior human expert must interject.

AI as an Accelerator, Not an Architect

The HITL protocol dictates that technical architecture must always be human-led. The senior engineering team defines the data models, the API contracts, the security protocols, and the deployment strategy.

Once the “architectural skeleton” is defined, AI agents are then deployed to write the implementation of the specific, small functions within that framework. The AI “fills in the blanks” that the human architect has set up. This ensures the foundational logic is sound and not subject to Vibe Coding.
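One way to picture this split is an interface owned by the human architect, with the AI confined to implementing it. The sketch below uses Python's `typing.Protocol`. The invoice domain and every name in it (`Invoice`, `InvoiceStore`, `InMemoryInvoiceStore`, `record_payment`) are hypothetical examples, not part of any Jhavtech codebase.

```python
from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass(frozen=True)
class Invoice:
    """Human-defined data model."""
    invoice_id: str
    amount_cents: int


class InvoiceStore(Protocol):
    """Human-defined contract: the architect owns this interface."""
    def save(self, invoice: Invoice) -> None: ...
    def get(self, invoice_id: str) -> Optional[Invoice]: ...


class InMemoryInvoiceStore:
    """The kind of small, bounded implementation an AI agent might fill in."""
    def __init__(self) -> None:
        self._items = {}

    def save(self, invoice: Invoice) -> None:
        if invoice.amount_cents < 0:
            raise ValueError("amount_cents must be non-negative")
        self._items[invoice.invoice_id] = invoice

    def get(self, invoice_id: str) -> Optional[Invoice]:
        return self._items.get(invoice_id)


def record_payment(store: InvoiceStore, invoice: Invoice) -> Invoice:
    """Business code depends on the contract, never on a concrete store."""
    store.save(invoice)
    saved = store.get(invoice.invoice_id)
    if saved is None:
        raise LookupError(f"invoice {invoice.invoice_id} was not persisted")
    return saved
```

Because callers depend only on `InvoiceStore`, a generated implementation can be reviewed, rejected, or replaced without touching the architecture around it.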

Implementation Guide: The Mandatory Validation Funnel

To counteract over-trust, we implement a mandatory “Validation Funnel” for all AI-generated code. AI code is quarantined and must pass through several stages before deployment:

- AI Static Analysis: Automated tools (which may themselves be AI-driven) check for obvious syntactical errors, deprecated calls, and security CVEs (Common Vulnerabilities and Exposures).

- Modular Unit Testing: AI agents are used to generate unit tests against the logic they just wrote. These tests must be validated by a human.

- Human Code Review (The Gatekeeper): This is the mandatory HITL. A senior developer (never the prompt-user) must line-item review the logic. They must explicitly look for context failures, hard-coded secrets, and inefficient complexity.
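The three stages above can be sketched as an ordered set of gates, where code stops at the first stage that reports a problem. This is a minimal illustration, not our production tooling. The `Submission` fields, the stage functions, and the deliberately crude secret-detection pattern are all assumptions made for the example.

```python
import re
from dataclasses import dataclass
from typing import List, Optional

# Crude, illustrative pattern; real secret scanners are far more thorough.
SECRET_PATTERN = re.compile(
    r"""(api_key|secret|password)\s*=\s*["'][^"']+["']""", re.IGNORECASE
)


@dataclass
class Submission:
    source: str                     # the AI-generated code
    author: str                     # the person who wrote the prompt
    reviewer: Optional[str] = None  # the senior developer assigned to review
    tests_passed: bool = False      # result of the human-validated unit tests


def static_analysis(s: Submission) -> List[str]:
    return ["possible hard-coded secret"] if SECRET_PATTERN.search(s.source) else []


def unit_tests(s: Submission) -> List[str]:
    return [] if s.tests_passed else ["unit tests have not passed"]


def human_review(s: Submission) -> List[str]:
    if s.reviewer is None:
        return ["no human reviewer assigned"]
    if s.reviewer == s.author:
        return ["reviewer must not be the prompt author"]
    return []


STAGES = [static_analysis, unit_tests, human_review]


def run_funnel(s: Submission) -> List[str]:
    """Return the first failing stage's problems; an empty list means deployable."""
    for stage in STAGES:
        problems = stage(s)
        if problems:
            return [f"{stage.__name__}: {p}" for p in problems]
    return []
```

The stages run in order of cost, so the cheap automated checks filter out obvious failures before a senior engineer's time is spent on review.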

Final Thoughts: The Mature View of AI in Software

The promise of AI in software development is real, but the hype cycle is dangerous. Founders over-trust AI not because the technology is flawed, but because the narrative surrounding its capabilities in 2026 is often oversold.

Real technical authority does not come from generating code quickly; it comes from understanding the systems, the security implications, and the business logic that the code serves. For Jhavtech Studios, and the founders we partner with, the future of development is neither solely human nor solely AI. It is the human intelligence that defines the protocol and the artificial intelligence that executes it. By trusting the process rather than the prompt, founders can build resilient, scalable platforms that stand the test of time.

Audit Your AI Strategy. Don’t let the “initial velocity” of AI create a hidden verification burden for your team. Whether you are architecting a new build or require a software project rescue for an AI-generated codebase, our senior engineering team is ready to help you implement a sustainable HITL protocol.
